In a recent post, I wrote about using codellama as a local code assistant.

CoLlama 🦙 - ollama as your AI coding assistant (local machine and free)

Paweł Ciosek ・ Jan 1

There was a sentence:

The extension do not support code completion, if you know extension that support code completion, please let me know in the comments. 🙏

You know what? I found a way to use llama to support code autocompletion! 🥳

As you might guess 🤓, you need ollama installed locally. If you don't have it yet, take a look at the previous post 🙏

So, what do we need?

- ollama model codellama:7b-code

- extension CodyAI -> https://marketplace.visualstudio.com/items?itemName=sourcegraph.cody-ai

Let's configure it together, step by step! 🧑‍💻

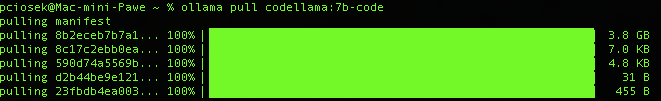

Download codellama:7b-code

The extension requires this model; you cannot choose any other 🫣, but it is pretty well optimised for the job ⚙️

ollama pull codellama:7b-code

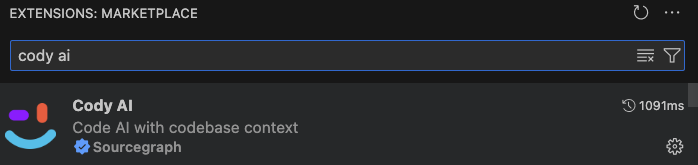
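
If you want to double-check that the pull worked, here is a minimal Python sketch. It assumes ollama is listening on its default address `http://localhost:11434` and uses its `/api/tags` endpoint, which lists local models. The helper names (`installed_models`, `has_model`, `fetch_tags`) are my own, made up for this example.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: ollama's default address
MODEL = "codellama:7b-code"


def installed_models(tags_payload: dict) -> list:
    """Extract model names from the JSON returned by GET /api/tags."""
    return [m.get("name", "") for m in tags_payload.get("models", [])]


def has_model(tags_payload: dict, model: str = MODEL) -> bool:
    """True if `model` is among the locally pulled models."""
    return model in installed_models(tags_payload)


def fetch_tags(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> dict:
    """Ask the running ollama server which models it has locally."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return json.load(resp)
```

With ollama running, `print(has_model(fetch_tags()))` should tell you whether the model is there. Running `ollama list` in the terminal gives you the same information without any code.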

Install CodyAI extension

You will find it in the Extensions view by searching for "cody ai".

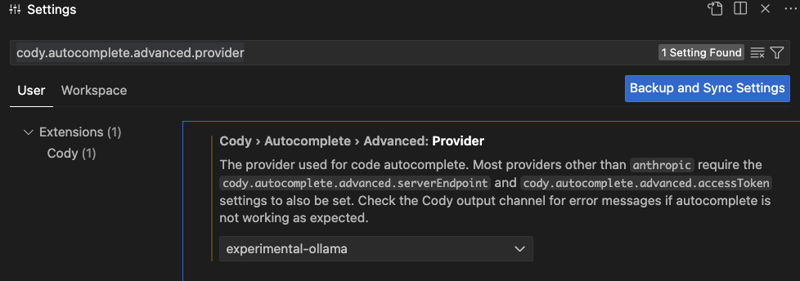

Configure CodyAI to use ollama as your companion

- go to VS Code settings

- type into the search bar: cody.autocomplete.advanced.provider

- you should see the option

- set it to "experimental-ollama"

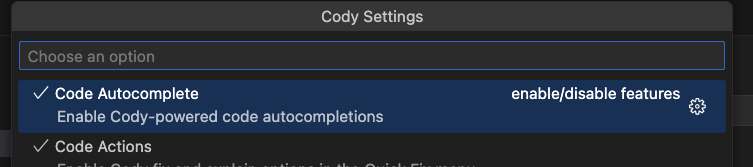
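
If you prefer editing settings as JSON, the same change is a single key in your user settings. Below is a small, hedged Python sketch that adds the key to settings text. The function name `with_ollama_provider` is hypothetical, and the sketch assumes the text is plain JSON; a real VS Code `settings.json` may contain comments or trailing commas, which `json.loads` rejects, so treat this as an illustration rather than a tool to run on your real file.

```python
import json

PROVIDER_KEY = "cody.autocomplete.advanced.provider"
PROVIDER_VALUE = "experimental-ollama"


def with_ollama_provider(settings_text: str) -> str:
    """Return settings JSON text with the Cody autocomplete provider set to ollama.

    Assumes plain JSON (no comments); empty input is treated as empty settings.
    """
    settings = json.loads(settings_text) if settings_text.strip() else {}
    settings[PROVIDER_KEY] = PROVIDER_VALUE  # existing keys are left untouched
    return json.dumps(settings, indent=2)
```

For example, `with_ollama_provider('{"editor.fontSize": 14}')` keeps the font size and adds the provider key next to it.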

Make sure CodyAI autocompletion is enabled

- click the CodyAI icon in the bottom-right status bar

- make sure the "Code autocomplete" option is enabled

Make sure you are running ollama

That seems obvious, but it's worth reminding! 😅

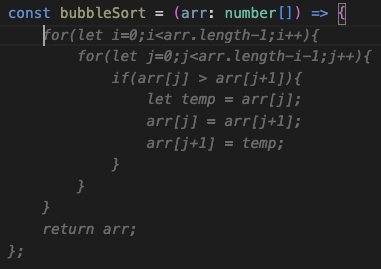
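
A quick way to check this from code is to poke the server's root URL. This is just a sketch, assuming the default address `http://localhost:11434`; the function name `ollama_is_running` is my own.

```python
import http.client
import urllib.request


def ollama_is_running(base_url: str = "http://localhost:11434", timeout: float = 2.0) -> bool:
    """Return True if something answers with HTTP 200 at base_url."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as resp:
            return resp.status == 200
    except (OSError, http.client.HTTPException):
        # Connection refused, timeouts and HTTP errors all land here
        # (urllib's URLError is a subclass of OSError).
        return False
```

If `ollama_is_running()` returns `False`, start ollama first and try again. Note that the check only confirms that something answers on that port, not that the model is loaded.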

Test it!
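
If no suggestions show up in the editor, it can help to check that the model itself answers completion requests. Here is a minimal sketch that talks to ollama directly through its `/api/generate` endpoint, using the fill-in-the-middle prompt format (`<PRE>`, `<SUF>`, `<MID>`) that code models like codellama:7b-code understand. I am not claiming this is exactly what the extension sends; the function names and the default address are assumptions for this example.

```python
import json
import urllib.request

MODEL = "codellama:7b-code"


def build_infill_prompt(prefix: str, suffix: str) -> str:
    """Build a Code Llama infill prompt: the model fills the gap between prefix and suffix."""
    return f"<PRE> {prefix} <SUF>{suffix} <MID>"


def complete(prefix: str, suffix: str,
             base_url: str = "http://localhost:11434", timeout: float = 60.0) -> str:
    """Request one non-streamed completion from a locally running ollama server."""
    body = json.dumps({
        "model": MODEL,
        "prompt": build_infill_prompt(prefix, suffix),
        "stream": False,  # return one JSON object instead of a stream of chunks
    }).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)["response"]
```

With ollama running, something like `print(complete("def compute_gcd(x, y):", "return result"))` should print the model's guess for the missing middle part. If that works but the editor stays silent, the problem is more likely in the extension settings than in ollama.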

Summary

So now you can have ollama supporting you as a chat assistant and with code autocompletion as well! 🤩

Local, secure and free! 🆓

Have you tried ollama as a code companion? What do you think about it?