TL;DR

These days, any dev can build powerful things with AI.

No need to be an ML expert.

Here are the 7 best libraries you can use to supercharge your development and to impress users with state-of-the-art AI features.

These can give your project magical powers, so DON'T FORGET TO STAR & SUPPORT THEM 🌟

1. CopilotTextarea - AI-powered Writing in React Apps

A drop-in replacement for any React <textarea> with the features of GitHub Copilot X.

Autocompletes, insertions, edits.

Can be fed any context in real time or by the developer ahead of time.

import { CopilotTextarea } from "@copilotkit/react-textarea";
import { CopilotProvider, useMakeCopilotReadable } from "@copilotkit/react-core";

// Provide context...
useMakeCopilotReadable(...)

// in your component...
<CopilotProvider>
  <CopilotTextarea/>
</CopilotProvider>

2. Tavily GPT Researcher - Get an LLM to Search the Web & Databases

Tavily lets you add GPT-powered research and content generation tools to your applications, enhancing their data processing and content creation capabilities. The example below registers a Tavily search function as a tool on an OpenAI assistant.

from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

# assistant_prompt_instruction is your own instruction string, defined elsewhere

# Create an assistant
assistant = client.beta.assistants.create(
    instructions=assistant_prompt_instruction,
    model="gpt-4-1106-preview",
    tools=[{
        "type": "function",
        "function": {
            "name": "tavily_search",
            "description": "Get information on recent events from the web.",
            "parameters": {
                "type": "object",
                "properties": {
                    "query": {"type": "string", "description": "The search query to use. For example: 'Latest news on Nvidia stock performance'"},
                },
                "required": ["query"]
            }
        }
    }]
)
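
When the assistant decides to use the tool, it hands back the function name plus a JSON string of arguments matching the schema above, and your code is responsible for actually running the search. Here is a minimal sketch of that dispatch step in plain Python. `fake_tavily_search` and `TOOL_HANDLERS` are hypothetical stand-ins, not part of Tavily or OpenAI; swap in a real search call in your app.

```python
import json

def fake_tavily_search(query: str) -> str:
    """Hypothetical stand-in for a real web search call."""
    return json.dumps({"query": query, "results": ["(search results would go here)"]})

# Map tool names from the assistant definition to local Python functions.
TOOL_HANDLERS = {"tavily_search": fake_tavily_search}

def run_tool_call(name: str, arguments_json: str) -> str:
    """Parse the model's JSON arguments and call the matching handler."""
    if name not in TOOL_HANDLERS:
        raise ValueError(f"Unknown tool: {name}")
    args = json.loads(arguments_json)
    if "query" not in args:
        raise ValueError("Missing required argument: query")
    return TOOL_HANDLERS[name](**args)

output = run_tool_call("tavily_search", '{"query": "Latest news on Nvidia stock performance"}')
print(output)
```

The string returned by the handler is what you would send back to the assistant as the tool's output.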

3. Pezzo.ai - Observability, Cost & Prompt Engineering Platform

Centralized platform for managing your OpenAI calls.

Optimize your prompts & token use. Keep track of your AI use.

Free & easy to integrate.

const prompt = await pezzo.getPrompt("AnalyzeSentiment");
const response = await openai.chat.completions.create(prompt);
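
To make "keep track of your AI use" concrete, here is a rough sketch of the bookkeeping such a platform does for you: totaling tokens per named prompt and estimating cost. It is written in Python for brevity and is not Pezzo's API; the `UsageTracker` class and the per-1K-token prices are made-up assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Assumed prices in USD per 1,000 tokens (illustrative only).
PRICE_PER_1K = {"prompt": 0.01, "completion": 0.03}

def _empty_usage() -> dict:
    return {"prompt": 0, "completion": 0}

@dataclass
class UsageTracker:
    """Hypothetical tracker that totals tokens per named prompt."""
    totals: dict = field(default_factory=lambda: defaultdict(_empty_usage))

    def record(self, prompt_name: str, prompt_tokens: int, completion_tokens: int) -> None:
        self.totals[prompt_name]["prompt"] += prompt_tokens
        self.totals[prompt_name]["completion"] += completion_tokens

    def cost(self, prompt_name: str) -> float:
        usage = self.totals[prompt_name]
        return sum(usage[kind] / 1000 * PRICE_PER_1K[kind] for kind in usage)

tracker = UsageTracker()
tracker.record("AnalyzeSentiment", prompt_tokens=1200, completion_tokens=300)
tracker.record("AnalyzeSentiment", prompt_tokens=800, completion_tokens=200)
print(tracker.totals["AnalyzeSentiment"])
print(f"Estimated cost: ${tracker.cost('AnalyzeSentiment'):.4f}")
```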

4. CopilotPortal - Embed an actionable LLM chatbot inside your app.

A context-aware LLM chatbot inside your application that answers questions and takes actions.

Get a working chatbot with a few lines of code, then customize and embed it as deeply as you need.

import "@copilotkit/react-ui/styles.css";
import { CopilotProvider } from "@copilotkit/react-core";
import { CopilotSidebarUIProvider } from "@copilotkit/react-ui";

export default function App(): JSX.Element {
  return (
    <CopilotProvider chatApiEndpoint="/api/copilotkit/chat">
      <CopilotSidebarUIProvider>
        <YourContent />
      </CopilotSidebarUIProvider>
    </CopilotProvider>
  );
}

5. LangChain - Pull together AIs into action chains.

Easy-to-use API and library for adding LLMs into apps.

Link together different AI components and models.

Easily embed context and semantic data for powerful integrations.

from langchain.llms import OpenAI
from langchain import PromptTemplate

llm = OpenAI(model_name="text-davinci-003", openai_api_key="YourAPIKey")

# Notice "food" below, that is a placeholder for another value later
template = """ I really want to eat {food}. How much should I eat? Respond in one short sentence """

prompt = PromptTemplate(
    input_variables=["food"],
    template=template,
)

final_prompt = prompt.format(food="Chicken")

print(f"Final Prompt: {final_prompt}")
print("-----------")
print(f"LLM Output: {llm(final_prompt)}")

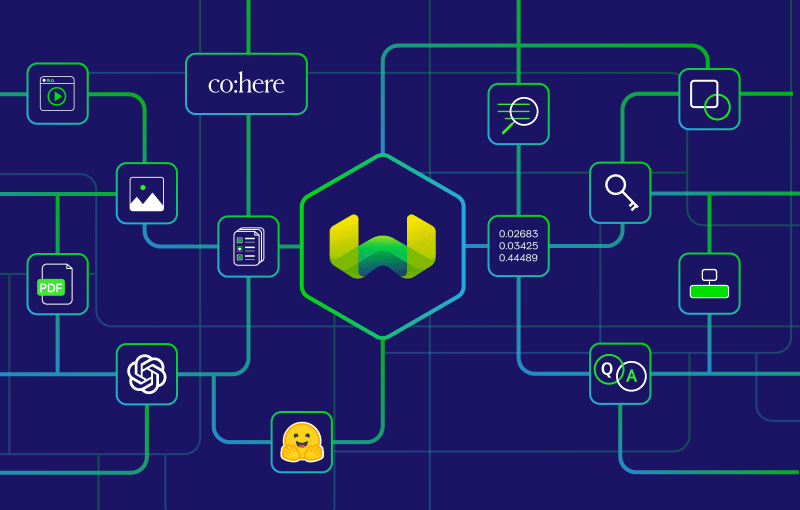
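
Conceptually, a chain is just steps run in order: fill a template, call a model, post-process the result. The sketch below shows that idea with the standard library only, so it runs without an API key. `fake_llm` is a hypothetical stand-in for a real model call, and none of these names are LangChain APIs.

```python
from typing import Callable

def make_prompt(template: str) -> Callable[[dict], str]:
    """Return a step that fills the template's {placeholders} from a dict."""
    return lambda values: template.format(**values)

def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call; it only reports the prompt size."""
    return f"(model reply to a {len(prompt)}-character prompt)"

def chain(*steps: Callable) -> Callable:
    """Compose steps so each one receives the previous step's output."""
    def run(value):
        for step in steps:
            value = step(value)
        return value
    return run

template = "I really want to eat {food}. How much should I eat? Respond in one short sentence"
pipeline = chain(make_prompt(template), fake_llm, str.strip)
print(pipeline({"food": "Chicken"}))
```

Swapping `fake_llm` for a real model call is the only change needed to turn this into a working pipeline.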

6. Weaviate - Vector Database for AI-Enhanced Projects

Weaviate is a vector database optimized for fast, efficient searches across large datasets.

It supports integration with AI models and services from providers like OpenAI and Hugging Face, enabling advanced tasks such as data classification and natural language processing.

It's a cloud-native solution and highly scalable to meet evolving data demands.

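import weaviate
import json

# Start an embedded Weaviate instance
client = weaviate.Client(
    embedded_options=weaviate.embedded.EmbeddedOptions(),
)

# Create an object in the MyClass class (the property below is a placeholder)
uuid = client.data_object.create({
    "hello": "World!",
}, "MyClass")

# Fetch the object back by its ID and print it
obj = client.data_object.get_by_id(uuid, class_name='MyClass')
print(json.dumps(obj, indent=2))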
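
The core operation a vector database performs is nearest-neighbor search over embeddings. As a rough illustration (not Weaviate's API or its indexing approach, which is far more efficient than this brute-force scan), here is cosine-similarity search over a few hand-written toy vectors. The vectors and document names are made up for the example.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy 3-dimensional "embeddings"; real ones come from a model and are much larger.
documents = {
    "pasta recipe": [0.9, 0.1, 0.0],
    "pizza recipe": [0.8, 0.2, 0.1],
    "tax filing guide": [0.0, 0.1, 0.9],
}

def search(query_vector: list[float], top_k: int = 2) -> list[tuple[str, float]]:
    """Score every document against the query and return the top_k best."""
    scored = [(name, cosine_similarity(query_vector, vec)) for name, vec in documents.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:top_k]

for name, score in search([1.0, 0.0, 0.0]):
    print(f"{name}: {score:.3f}")
```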

7. PrivateGPT - Chat with your Docs, 100% privately 💡

PrivateGPT allows for secure, GPT-driven document interaction within your applications.

It preserves privacy by processing and storing documents and context locally, without sending data to external servers.

from privategpt import PrivateGPT, DocumentIngestion, ChatCompletion

client = PrivateGPT(api_key='your_api_key')

def process_documents_and_chat(query, documents):
    ingestion_result = DocumentIngestion(client, documents)
    chat_result = ChatCompletion(client, query, context=ingestion_result.context)
    return chat_result

documents = ['doc1.txt', 'doc2.txt']
query = "What is the summary of the documents?"
result = process_documents_and_chat(query, documents)
print(result)
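
The "ingest locally, then answer from retrieved context" flow can be sketched without any network calls. The example below splits documents into chunks, picks the chunk sharing the most words with the question, and builds a prompt from it. It is a simplified illustration, not PrivateGPT's implementation: real systems typically rank chunks by embedding similarity rather than word overlap, and every function name here is hypothetical.

```python
import re

def chunk_text(text: str, max_words: int = 40) -> list[str]:
    """Split text into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its distinct alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Rank chunks by how many distinct words they share with the question."""
    q_words = tokenize(question)
    ranked = sorted(chunks, key=lambda c: len(q_words & tokenize(c)), reverse=True)
    return ranked[:top_k]

def build_prompt(question: str, context_chunks: list[str]) -> str:
    """Assemble the retrieved chunks and the question into one prompt string."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# In-memory stand-ins for local files such as doc1.txt and doc2.txt.
docs = [
    "The invoice for March totals 1200 dollars and is due on April 15.",
    "The office moves to the new building in June.",
]
chunks = [chunk for doc in docs for chunk in chunk_text(doc)]
question = "When is the March invoice due?"
print(build_prompt(question, retrieve(question, chunks, top_k=1)))
```

The resulting prompt would then go to a locally running model, so neither the documents nor the question leave the machine.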

[Bonus Library] SwirlSearch - AI-powered search.

LLM-powered search, summaries and output.

Searches multiple content sources simultaneously and merges the results into integrated output.

Powerful for tailored in-app integrations of various data sources.
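
Searching several sources at once and merging the results is a pattern often called federated search. Here is a minimal sketch of it using a thread pool. The in-memory sources and the scoring rule are hypothetical placeholders, and this does not use Swirl's API.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical sources; in practice each would be a database, API, or index.
SOURCES = {
    "wiki": ["Vector databases explained", "History of search engines"],
    "tickets": ["Search page times out", "Add vector search to app"],
    "docs": ["Deploying the search service"],
}

def search_source(name: str, query: str) -> list[dict]:
    """Return matches from one source, scored by the number of query words found."""
    terms = query.lower().split()
    hits = []
    for title in SOURCES[name]:
        score = sum(term in title.lower() for term in terms)
        if score:
            hits.append({"source": name, "title": title, "score": score})
    return hits

def federated_search(query: str) -> list[dict]:
    """Query every source in parallel and merge results, best score first."""
    with ThreadPoolExecutor() as pool:
        per_source = list(pool.map(lambda name: search_source(name, query), SOURCES))
    merged = [hit for hits in per_source for hit in hits]
    return sorted(merged, key=lambda hit: hit["score"], reverse=True)

for hit in federated_search("vector search"):
    print(hit["score"], hit["source"], hit["title"])
```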

Thanks for reading!

I hope these help you build some awesome stuff with AI.

Please like this post if you enjoyed it, and comment with any other libraries or topics you would like to see.